Parts of Speech–Grounded Subspaces in Vision-Language Models

James Oldfield¹, Christos Tzelepis¹, Yannis Panagakis², Mihalis A Nicolaou³, Ioannis Patras¹

¹Queen Mary University of London, ²University of Athens, ³The Cyprus Institute

NeurIPS 2023

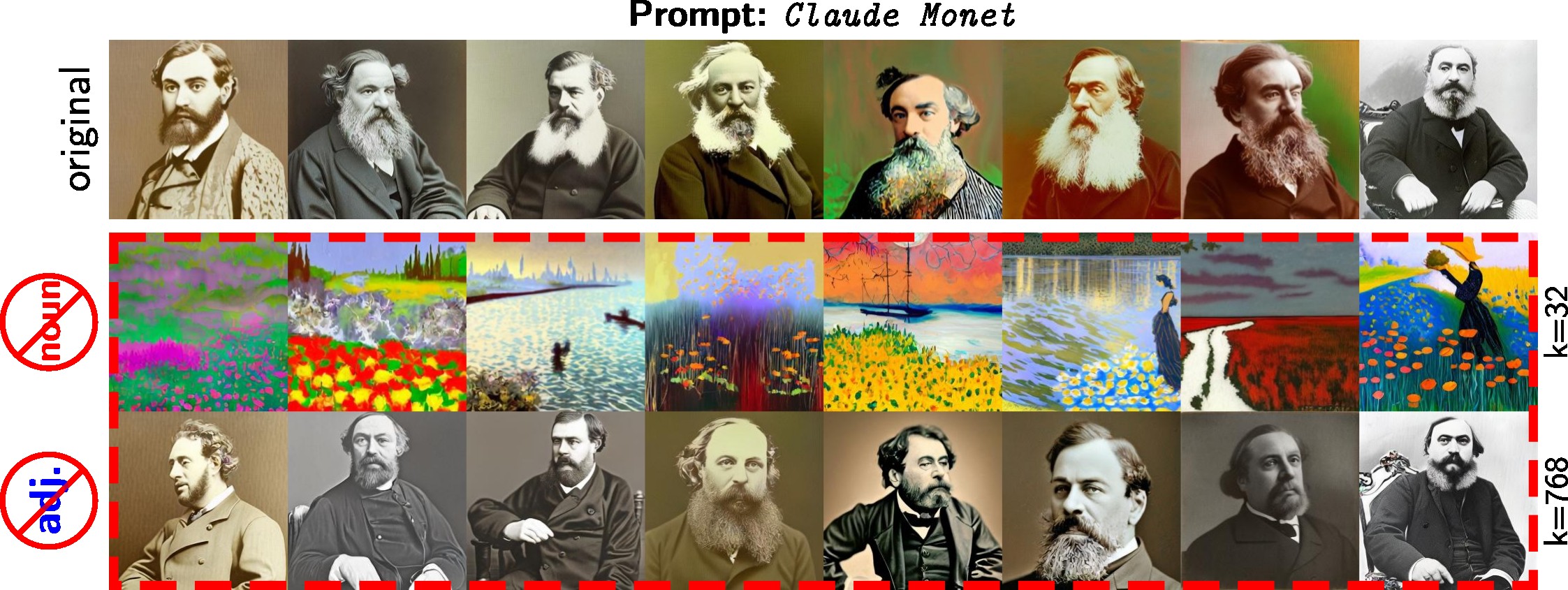

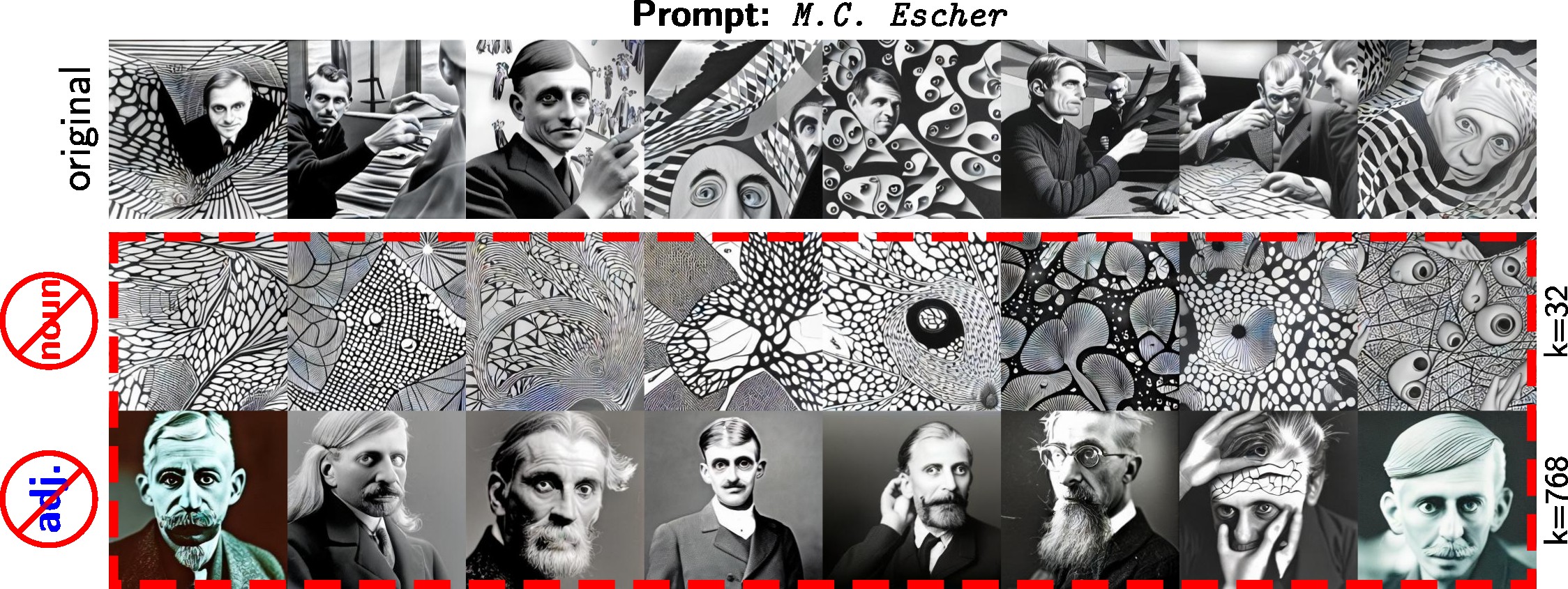

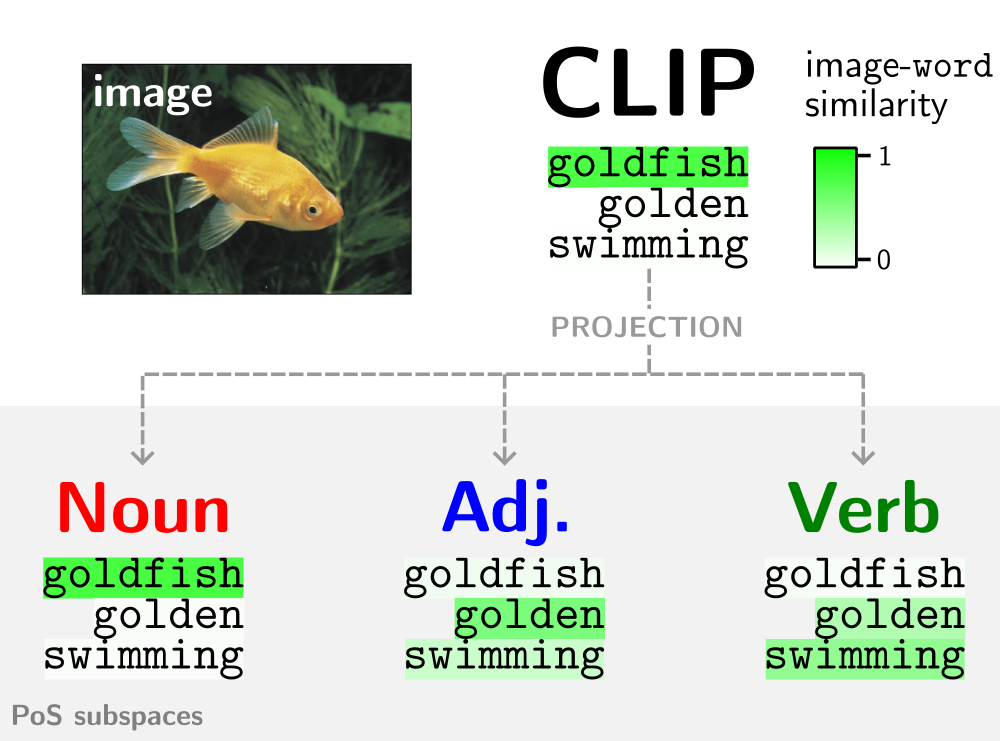

CLIP represents multiple visual modes of variation in an embedding (e.g. the ‘object’ and its ‘appearance’). The learnt PoS subspaces more reliably separate the constituent visual components.

Abstract

Latent image representations arising from vision-language models have proved immensely useful for a variety of downstream tasks. However, their utility is limited by their entanglement with respect to different visual attributes. For instance, recent work has shown that CLIP image representations are often biased toward specific visual properties (such as objects or actions) in an unpredictable manner. In this paper, we propose to separate representations of the different visual modalities in CLIP’s joint vision-language space by leveraging the association between parts of speech and specific visual modes of variation (e.g. nouns relate to objects, adjectives describe appearance). This is achieved by formulating an appropriate component analysis model that learns subspaces capturing variability corresponding to a specific part of speech, while jointly minimising variability to the rest. Such a subspace yields disentangled representations of the different visual properties of an image or text in closed form while respecting the underlying geometry of the manifold on which the representations lie. What’s more, we show the proposed model additionally facilitates learning subspaces corresponding to specific visual appearances (e.g. artists’ painting styles), which enables the selective removal of entire visual themes from CLIP-based text-to-image synthesis. We validate the model both qualitatively, by visualising the subspace projections with a text-to-image model and by preventing the imitation of artists’ styles, and quantitatively, through class invariance metrics and improvements to baseline zero-shot classification.

Method Overview

Geometry-aware subspaces in CLIP's joint vision-language space are learnt (in closed form) to disentangle the visual modes of variation.
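As a rough illustration of what a closed-form subspace of this kind can look like, the sketch below assumes a contrastive, PCA-style objective: maximise the projected variance of the embeddings for the target part of speech while penalising that of the remaining parts of speech, subject to orthonormal basis vectors. Under that assumption, the solution is given by the top-k eigenvectors of a weighted difference of second-moment matrices. The helper `learn_subspace`, the trade-off weight `lam`, and the random stand-in data are all illustrative assumptions rather than the released code, and the geometry-aware step is omitted.

```python
import numpy as np

def learn_subspace(target, others, k, lam=0.5):
    """Return a (d, k) orthonormal basis (hypothetical helper, assumed objective).

    target: (n, d) embeddings of words tagged with the part of speech of interest.
    others: list of (m_j, d) arrays, one per remaining part of speech.
    lam:    assumed trade-off weight in [0, 1].
    """
    # Weighted difference of (uncentred) second-moment matrices.
    M = (1.0 - lam) * target.T @ target
    for X in others:
        M -= lam * X.T @ X
    # M is symmetric, so eigh applies; it returns eigenvalues in ascending order.
    _, eigvecs = np.linalg.eigh(M)
    return eigvecs[:, ::-1][:, :k]  # eigenvectors of the k largest eigenvalues

rng = np.random.default_rng(0)
d = 16
# Stand-in data: "adjectives" vary along the first 3 axes, "nouns" along the next 3.
adjectives = np.zeros((200, d))
adjectives[:, :3] = rng.normal(size=(200, 3))
nouns = np.zeros((200, d))
nouns[:, 3:6] = rng.normal(size=(200, 3))

W = learn_subspace(adjectives, [nouns], k=3)
print(np.allclose(W.T @ W, np.eye(3)))         # basis is orthonormal
print(np.allclose(nouns @ W, 0.0, atol=1e-8))  # "noun" variation is not captured
```

In this toy setting the learnt basis spans the axes along which only the stand-in adjectives vary, which is the behaviour the objective is meant to encourage.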

Isolating or removing visual components

To isolate or remove visual variation corresponding to a particular part of speech (e.g. adjectives for 'appearance'), one can subsequently project the CLIP representation onto the subspace or its orthogonal complement, respectively.
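The two projections can be sketched as follows, assuming the subspace is available as a matrix `W` with orthonormal columns. The function names and the random stand-in basis and embedding are illustrative assumptions, not a learnt PoS subspace or a real CLIP representation, and the geometry-aware handling of the embedding space is left out.

```python
import numpy as np

def isolate(z, W):
    """Project z onto the subspace spanned by the orthonormal columns of W."""
    return W @ (W.T @ z)

def remove(z, W):
    """Project z onto the orthogonal complement of that subspace."""
    return z - isolate(z, W)

rng = np.random.default_rng(0)
d, k = 8, 2
# Stand-in orthonormal basis obtained from a (reduced) QR decomposition.
W, _ = np.linalg.qr(rng.normal(size=(d, k)))
z = rng.normal(size=d)  # stand-in for a CLIP embedding

z_in, z_out = isolate(z, W), remove(z, W)
print(np.allclose(z_in + z_out, z))    # the two parts sum back to z
print(np.allclose(W.T @ z_out, 0.0))   # nothing of the subspace remains in z_out
```

The two outputs are orthogonal and sum to the original vector, so isolating and removing a component are complementary operations on the same embedding.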

Results

Visualising subspace disentanglement

We can visualise the projected representations with LAION's CLIP-based text-to-image model Paella.

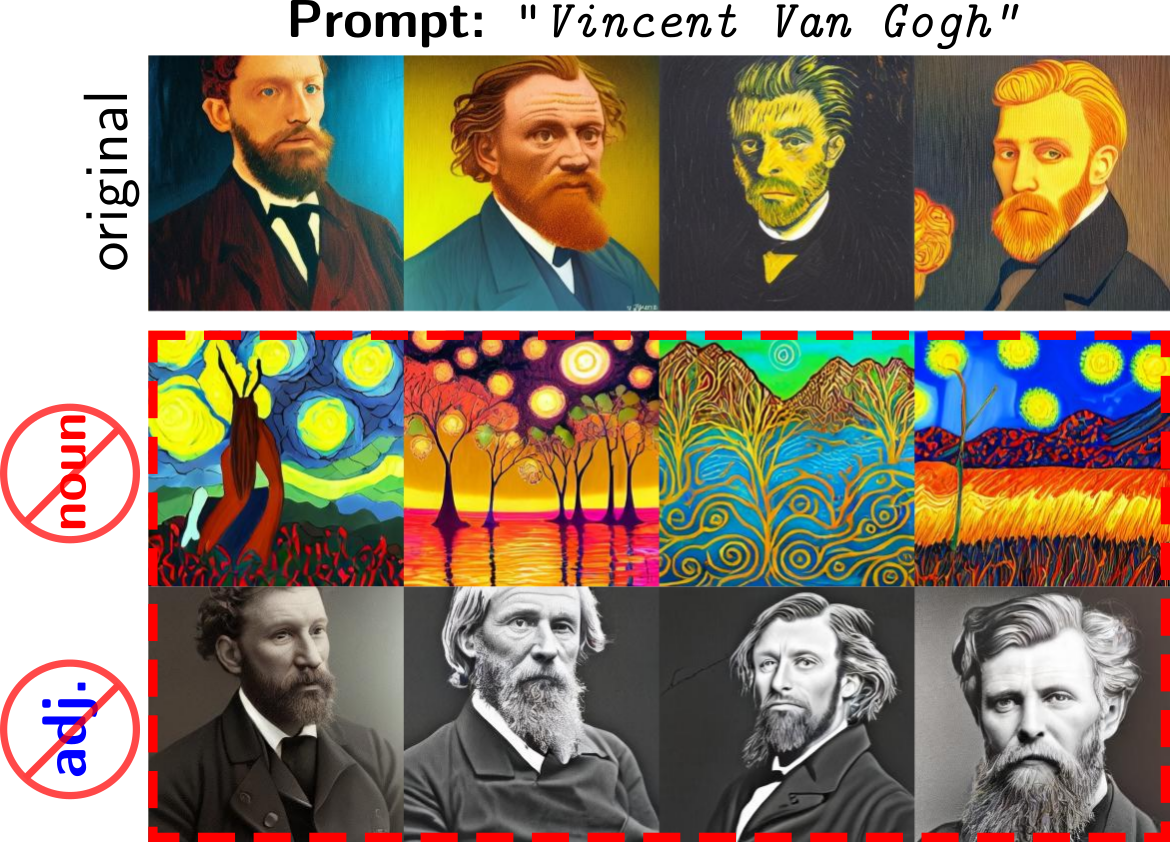

As one motivating example, CLIP entangles the representations of the multiple visual associations of an artist's name. The subspace projections better separate the visual representation of the artist's work from that of their physical appearance:

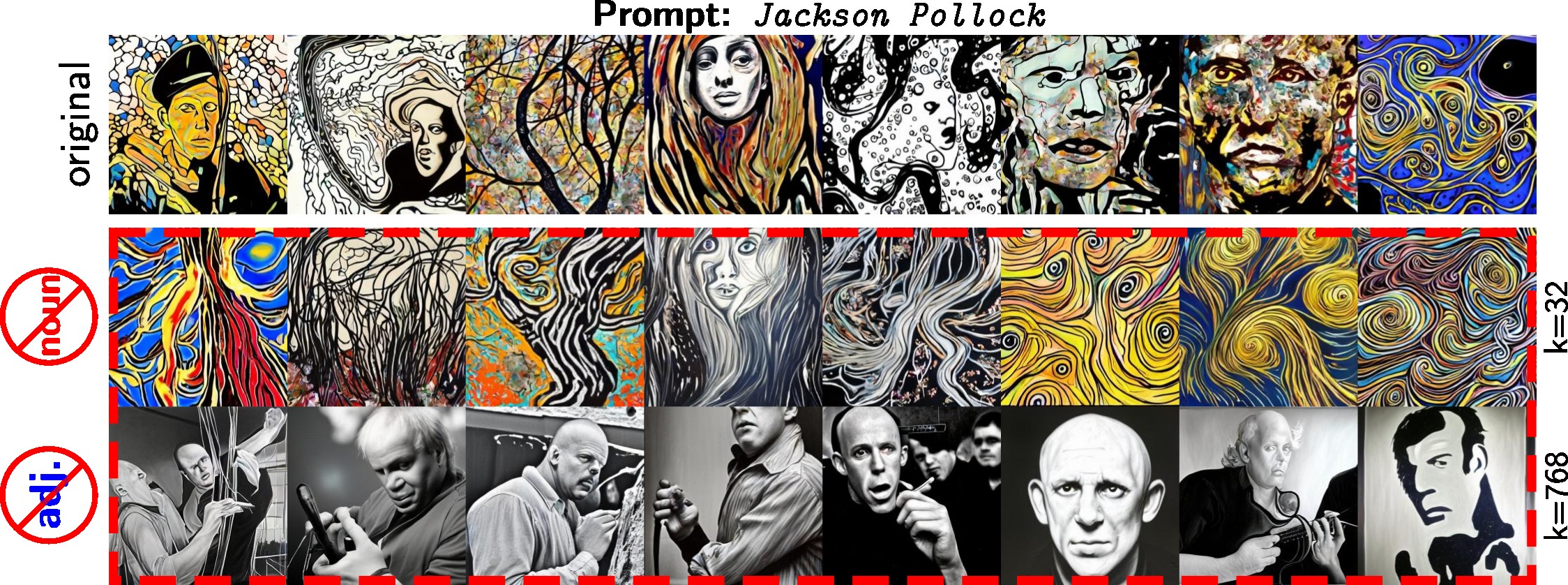

Concept erasure

The subspace projections are particularly useful in settings where prompt engineering is not possible, for example when a user has free rein over the text input:

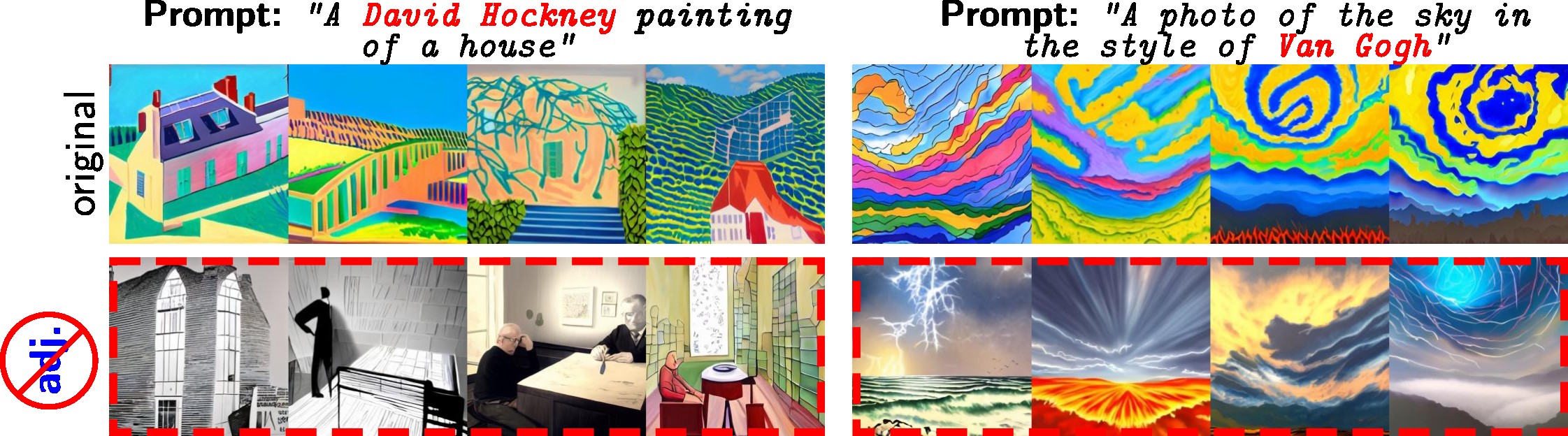

Style-blocking projections

Projecting onto the orthogonal complement of the adjective subspace removes the style-based variation in the CLIP representations. This offers a simple way to block the imitation of artists' styles in CLIP-based text-to-image synthesis:

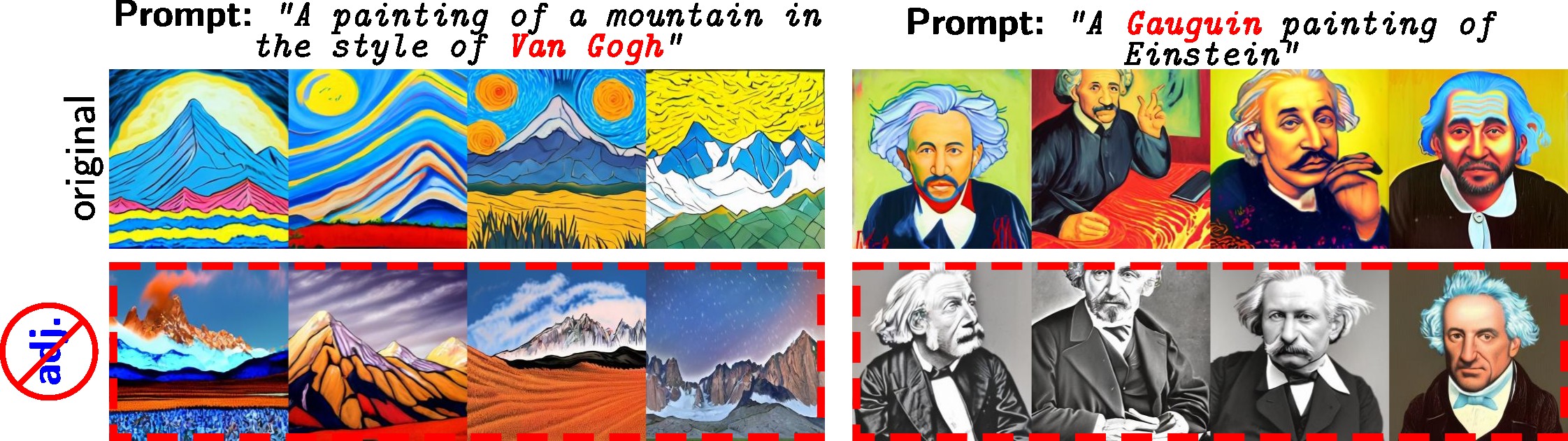

Custom visual theme subspaces

Further, subspaces capturing variation corresponding to more specific visual themes can be learnt through the proposed objective by supplying a custom dictionary of related words. Removing these subspaces' components from the CLIP embeddings limits the downstream ability of CLIP-based text-to-image models (TTIMs) to synthesise those specific visual appearances.

BibTeX

If you find our work useful, please consider citing us:

@inproceedings{oldfield2023pos,
  title={Parts of Speech-Grounded Subspaces in Vision-Language Models},
  author={James Oldfield and Christos Tzelepis and Yannis Panagakis and Mihalis A. Nicolaou and Ioannis Patras},
  year={2023},
  booktitle={Advances in Neural Information Processing Systems},
}